Galatea

Large Language Models, Part I

“Man, Who Knows Themself?” 2017. Ink on (discarded) poly/cotton tablecloth. Installation view, solo exhibition “Apocrypha” at Knulp in 2017.

"Once home, he went straight to the replica of his sweetheart,

threw himself down on the couch and repeatedly kissed her;

she seemed to grow warm and so he repeated the action,

kissing her lips and exciting her breasts with both hands.

Aroused, the ivory softened and, losing its stiffness,

yielded, submitting to his caress as wax softens

when it is warmed by the sun, and handled by fingers,

takes on many forms, and by being used, becomes useful."

-Ovid, Metamorphoses, 350-3601

Throughout human history our technologies have changed us. Through all the discussions about people finding god in the machine, and the inevitable explanation that technology has always inspired this kind of projection,2 what has always seemed to be missing from the discourse, for me at least, is the most fundamental of our technologies. Writing rewired our brains, for example, such that we remember less than those in oral cultures. (Usually the cost/benefit ratio comes out as basically neutral: one loses some capacities and gains others, a trade most beautifully explored in Jesuit historian Walter J. Ong’s Orality and Literacy.)3 Now we rarely think of the written word as a technology, let alone language itself, and yet everything we seem to consider “hi tech” or an efficiency still pertains to "communications". Amidst all the highs and lows of the seemingly endless articles on Artificial Intelligence (AI) and Artificial General Intelligence (AGI),4 is the completely hilarious fact that, in the incredibly short history of this field, the computer scientists involved presume to understand what consciousness is, a question which has eluded the greatest minds humanity has ever produced. Mechanical engineer and journalist Karen Hao explains in her excellent new book Empire of AI that: "Throughout history, neuroscientists, biologists, and psychologists have all come up with varying explanations for what it is and why it seems that humans have more of it than any other species."5 The sciences would seem to have triumphed, to the point that even a very capable writer credits only the efforts of "neuroscientists, biologists, and psychologists" in the understanding of consciousness, what it is to be human, what language is, what intelligence is, etc. These would appear to be the relevant parameters for the construction of a new consciousness to those hell-bent on surpassing humanity (or suppressing it even to the end of mass-murder, both active and passive).
And these imperialists, who are still in charge, generally seem to be the ones advancing the notion of Western Civilisation, which is literally coded into these technologies that have continually proven to be racist, seemingly while ignoring 4000 years of the history of Western thought. For Hao's thesis, as I understand it, is that the spread of AI, particularly in terms of political and economic reach, is basically reproducing some of the worst effects of global imperialism, particularly through the efforts of the company OpenAI, which is responsible for ChatGPT. The word "hubris" really doesn't get thrown around enough these days.

Though, the more intelligent among the tech lords do seem to understand what is basically at stake. Even Peter Thiel, the nefarious billionaire attempting to aggregate all data towards an all-encompassing surveillance state with the company Palantir, has admitted that it is maths comprehension and not the humanities that is likely to be replaced by computation.6 This has been all but confirmed in a recent spate of reports about the comparatively high unemployment rate of recent computer science graduates, whose jobs doing low-level coding are the first to be successfully automated,7 or by the report MIT released on Thursday, which confirms that 95% of businesses have seen no return on their investment in generative AI.8 It turns out that perhaps the jobs computer scientists best understood how to reproduce with non-human actors were their own. This information is being posted alongside the rather (unintentionally) hilarious article by "former contributor" Victoria Feng in Forbes Magazine about the inevitability of (actually realised) AI wiping out all human life, as described by computer science dropouts (who must surely know something more than the rest of us)... and who have gone on to secure themselves positions preventing the worst effects of AI (somewhat conveniently, considering that very few understand this opaque but basically simply aggregative technology). It is hard not to see something cynical in these pragmatists who chose the most “future proof” degree only to have recently seen it backfire. Quoth Feng:

"Physics and computer science major Adam Kaufman left Harvard University last fall to work full-time at Redwood Research, a nonprofit examining deceptive AI systems that could act against human interests.

“I’m quite worried about the risks and think that the most important thing to work on is mitigating them,” said Kaufman. “Somewhat more selfishly, I just think it’s really interesting. I work with the smartest people I’ve ever met on super important problems.”

He’s not alone. His brother, roommate and girlfriend have also taken leave from Harvard for similar reasons. The three of them currently work for OpenAI."9

Laugh cry emoji.

As a practicing Luddite, I have been playing catch-up with this particular discipline and will have more to write on my immediate reservations after I get through more of the materials, particularly the work of the most famous skeptic of the "connectionist" methodology of building "AGI", Gary Marcus, who is described as a "psycholinguist" (fuck me). But I do struggle a bit in reading these texts by people who have literally trained to automate communication but don't immediately impress as having a rudimentary understanding of the humanities. There is a quite fascinating case study in Hao's book about how a very basic program, designed to have a conversation simply by parroting what was said to it, was so psychologically convincing that its users immediately attributed intelligence to it simply on the basis of its apparent capacity for speech:

"(Joseph) Weizenbaum designed the system as an experiment to see how easily humans might fall for an illusion of intelligence. ELIZA’s namesake was Eliza Doolittle, a fictional working-class flower girl portrayed by Audrey Hepburn in the 1956 film My Fair Lady, who learns to pass as a duchess in high society after a wealthy man teaches her to change her diction and manners. ELIZA’s subsequent success in fooling people into believing it to be intelligent alarmed Weizenbaum. In fact, the demonstration felt so convincing to some that psychiatrists began to speak of automated psychotherapy as just around the corner, and merely a few years after the founding of the AI field, computer scientists were already prematurely concluding that natural language understanding in computers was a solved problem. (Decades later, whether or not it’s even been solved today is still an open debate.)"10

What immediately struck me about this paragraph is the reference to the Eliza Doolittle of the Audrey Hepburn film. This was, of course, and much more appropriately, based on the George Bernard Shaw play entitled "Pygmalion", after the story in Ovid's Metamorphoses (quoted in the passage at the beginning of this piece), of a sculptor who falls in love with his creation, who is then animated by the goddess Aphrodite. Shaw is, of course, being ironic in describing the "civilising" attempts of the protagonist Henry Higgins, apparently meaning to create a woman in his own image, literally turning the apparently subhuman lower-class woman into someone he would consider human. I know I have written it too many times, but I think that the humanities might actually be quite important for understanding humanity.

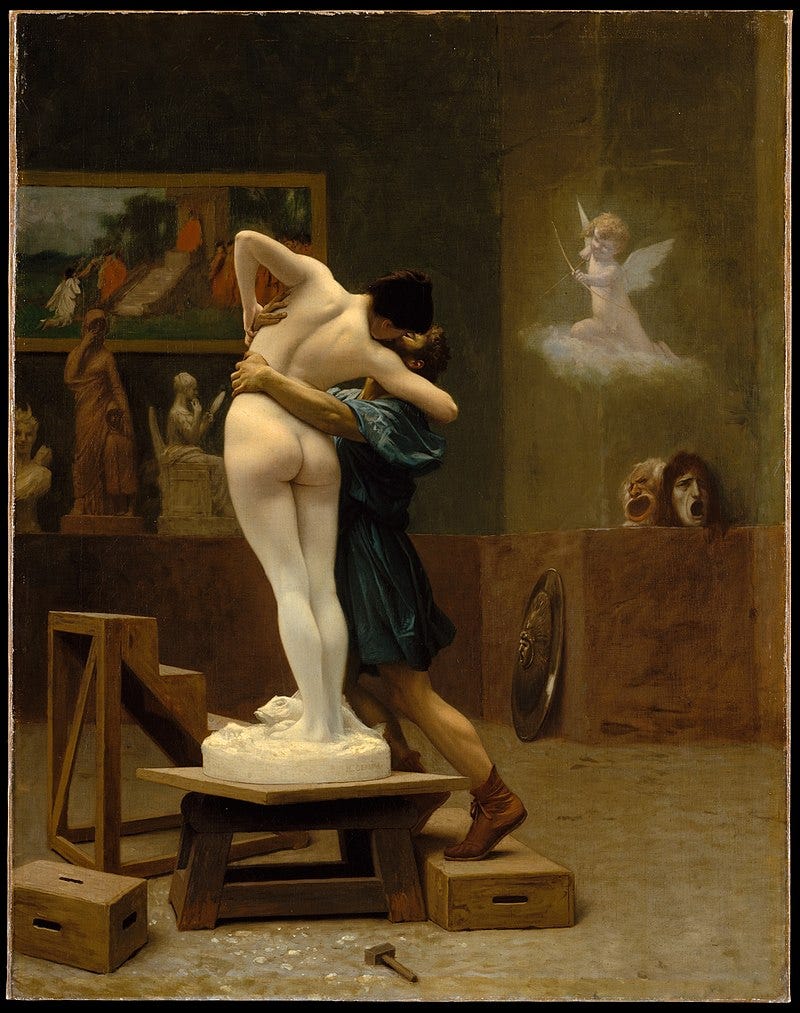

"Pygmalion and Galatea" is also the subject/name of perhaps the most famous history painting by Jean-Léon Gérôme (1890), which I have always found to be especially naff:

The fact that we continuously attribute intelligence simply to the power of speech is perhaps the most interesting thing to be gleaned from studies surrounding AI. Human beings have always ascribed power to the miracle of naming. In an aside in Moses and Monotheism, Sigmund Freud wrote of how, before the written word, speech was synonymous with magic, as our ancestors marvelled at their own invention: “All magic, the predecessor of science, is basically founded on these premises. All magic of words belongs here, as does the conviction of the power connected with the knowledge and the pronouncing of a name. We surmise that "omnipotence of thoughts" was the expression of the pride mankind took in the development of language, which had brought in its train such an extraordinary increase in the intellectual faculties.”11 And it seems that this same sense of wonder persists as we continuously conflate speech with consciousness. Meanwhile, the question of who is allowed to speak is far from resolved, as famously contended by Gayatri Chakravorty Spivak in “Can the Subaltern Speak?”

The traditions of the current major religions all arose around the time of the invention of the written word, the Phoenician or Proto-Hebrew alphabet, and Sanskrit (Judaism, from which Christianity and Islam were derived; and Hinduism, from which Buddhism is derived). In Becoming Beside Ourselves: The Alphabet, Ghosts, and Distributed Human Being, mathematician and philosopher Brian Rotman postulated that the literalism we are currently subject to, particularly where religious extremism is concerned, is a reaction against new media, which threatens to supplant the written word. Rotman thinks that these are the last days of “alphabetism”. It is an interesting idea that has resonated with me particularly over the past two years, as publications (especially once-trusted outlets such as the BBC and the New York Times) have sought to overwrite the overwhelming physical evidence of genocide. Even the least “political” of people are now seeing how insane these attempts are, and the power dynamic is dramatically shifting. The blatant immorality of these times is enough to make even the hardened atheist desirous of the moral framework of religion in place of what seems to have become a series of death cults.

A capacity to read critically has become a moral imperative. At the same time, AI is further degrading literacy because students need good marks more than they need to learn writing skills in this economy. These metrics are now all that is important: getting into outrageously expensive tertiary programs that offer ever less of an education, but which distinguish their graduates as suitably obedient to be afforded well-remunerated jobs. Having a knowledge of how to decipher texts, to construct an argument, or even a grounding in history, are things that are not only no longer socially necessary but almost dangerous to cultivate. (I am pretty good at these things, have taught them at a university level, and I now work in a shop, lol.) One could argue that a willingness to cheat, in the sense of generating rather than writing an essay, probably demonstrates the skills necessary to get ahead in this system. But on the other side you will have nothing, not even your own thoughts. Never mind, also, that no one in the history of the world has needed to read an undergraduate essay. That millions have been employed to do so in the service of the development of student writers belongs to a past where cultivation was seen as a good thing, and not somehow antidemocratic. These enforced hierarchies are no longer even producing diminishing returns, but net losses, on every conceivable front. Technology has reached the point where it offers only solutions for problems that ought not exist.

The constant denigration of our capacity to read in a broader sense, to be visually literate, is thus part of the assault on our capacity to resist. We are now being bombarded with the gaslighting of an ailing Empire that seems to all-but-convince a lot of people that the babies we see being murdered every day are nothing more than images, because images can never mean as much as their magical words. We are all in a state of dissociation and dissonance where the reality is too awful to process, collectively retreating to deeply aestheticised fantasy worlds constructed by a cynical mass-media, broadly acknowledged to be struggling to even make pale imitations of the cultural output of the mid-to-late-20th-century. The public, and perhaps especially the contemporary artist, has additionally become functionally visually illiterate as a result of this (probably) psychologically necessary suppression. But the truth, or some base psychological approximation of it, is as necessary to literacy as it is to good art (which I mean in the broader popular sense as well as “high” art). In the aforementioned interview Thiel is asked if he is a Calvinist (to his obvious annoyance), and his religion is certainly important, not because any system of belief is superior, but because the world is still being run by the same people who created such gross inequality, and their ideology has barely shifted. In a recent interview he speaks of creating a system of total surveillance to prevent “the antichrist”. Apparently this involves supplying Israel with technology to help in its “war effort”. My grandfather gave up his very culturally important (Irish) Catholicism to marry my Protestant grandmother, and they will always be among the best people I can think of, something they would call “Christian”, which I suppose had something to do with their interpretation of the text.
As Max Weber famously demonstrated, the “Protestant Ethic” was also to exemplify the “Spirit of Capitalism”.12 The denial of the evidence of our own eyes is also oddly reminiscent of foundational Protestant theologian John Calvin’s insistence that hell cannot be equated with anything like human sensory experience. And yet we see images of children in flames and may well wonder how it can be anything else.

1 Ovid, Metamorphoses, Translated by Charles Martin, Norton Editions: New York, 2004, p.290

3 Walter J. Ong. Orality and Literacy. Taylor and Francis, 2013. https://doi.org/10.4324/9780203426258.

4 Karen Hao, Empire of AI: Dreams and Nightmares in Sam Altman’s OpenAI, Penguin: New York, 2025.

5 Ibid, 91

6 https://conversationswithtyler.com/episodes/peter-thiel-political-theology/

7 https://fortune.com/2025/08/15/ai-gutting-next-generation-of-talent/

8 https://economictimes.indiatimes.com/news/international/us/ai-return-on-investment-30-billion-down-the-drain-mit-says-95-of-companies-see-no-returns-from-generative-ai-latest-news/articleshow/123440305.cms

9 https://www.forbes.com/sites/victoriafeng/2025/08/06/fear-of-super-intelligent-ai-is-driving-harvard-and-mit-students-to-drop-out/

10 Hao, ibid, 96

11 Sigmund Freud, Moses and Monotheism, Trans. Katherine Jones, Hogarth Press, 1939, p.179

12 Weber, Max, Sebastián G Guzmán, and James Hill. Max Weber’s The Protestant Ethic and the Spirit of Capitalism. 1st ed. Routledge, 2007. https://doi.org/10.4324/9781912282708.